Object Detection Necks: FPN (Feature Pyramid Network) and PAN (with PyTorch code)

2024-08-01 • Internet of Vehicles


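FPN and PAN are both neck designs for improving object detection across multiple scales. PAN builds on FPN to address FPN's remaining shortcomings in multi-scale detection. Their principles and differences are explained in detail below.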

0. Preface

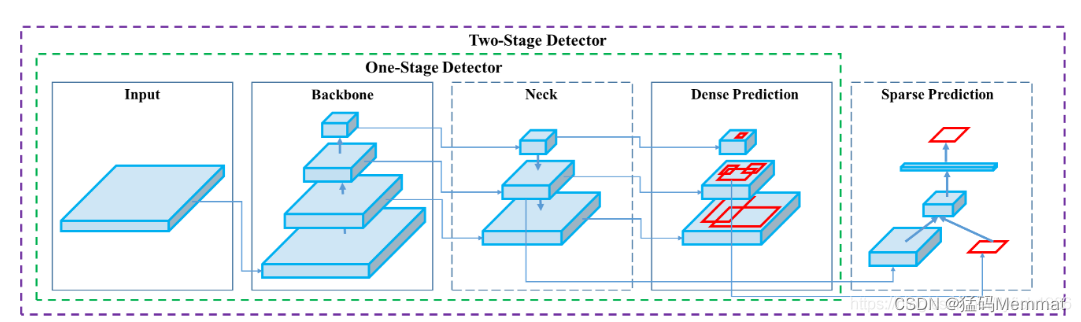

Components of an object detector

- Input: Image, Patches, Image Pyramid
- Backbones: VGG16, ResNet (ResNet-18, ResNet-34, ResNet-50, ResNet-101, ResNet-152), SpineNet, EfficientNet-B0/B7, CSPResNeXt50, CSPDarknet53, MobileNet (v1, v2, v3), ShuffleNet (v1, v2), GhostNet
- Neck:
  - Additional blocks: SPP, ASPP, RFB, SAM
  - Path-aggregation blocks: FPN, PAN, NAS-FPN, Fully-connected FPN, BiFPN, ASFF, SFAM
- Heads:
  - Dense prediction (one-stage):
    - RPN, SSD, YOLO, RetinaNet (anchor-based)
    - CornerNet, CenterNet, MatrixNet, FCOS (FCOSv1, FCOSv2), ATSS, PAA (anchor-free)
  - Sparse prediction (two-stage):
    - Faster R-CNN, R-FCN, Mask R-CNN (anchor-based)
    - RepPoints (anchor-free)
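The list above splits a detector into input, backbone, neck and head. The toy PyTorch sketch below shows roughly how those parts plug together. `TinyBackbone`, `ProjectionNeck`, `SharedHead` and `Detector` are hypothetical names invented for this example. The neck only projects each level to a common width, whereas a real FPN or PAN would also fuse the levels. The head is a bare one-stage-style dense predictor with no anchors, box decoding or loss.

```python
import torch
import torch.nn as nn

# Hypothetical toy modules, for illustration only.


class TinyBackbone(nn.Module):
    """Stack of stride-2 conv stages that returns every stage's output."""

    def __init__(self, channels=(16, 32, 64)):
        super().__init__()
        stages, in_ch = [], 3
        for out_ch in channels:
            stages.append(nn.Sequential(
                nn.Conv2d(in_ch, out_ch, 3, stride=2, padding=1),
                nn.ReLU(inplace=True),
            ))
            in_ch = out_ch
        self.stages = nn.ModuleList(stages)

    def forward(self, x):
        feats = []
        for stage in self.stages:
            x = stage(x)  # each stage halves the spatial size
            feats.append(x)
        return feats  # ordered fine -> coarse


class ProjectionNeck(nn.Module):
    """Stand-in neck: a 1x1 conv per level to a common width (no cross-level fusion)."""

    def __init__(self, in_channels, out_channels):
        super().__init__()
        self.proj = nn.ModuleList(nn.Conv2d(c, out_channels, 1) for c in in_channels)

    def forward(self, feats):
        return [proj(f) for proj, f in zip(self.proj, feats)]


class SharedHead(nn.Module):
    """Dense head shared by all levels: class scores + 4 box values per location."""

    def __init__(self, channels, num_classes):
        super().__init__()
        self.cls = nn.Conv2d(channels, num_classes, 3, padding=1)
        self.box = nn.Conv2d(channels, 4, 3, padding=1)

    def forward(self, feats):
        return [(self.cls(f), self.box(f)) for f in feats]


class Detector(nn.Module):
    """input -> backbone -> neck -> head"""

    def __init__(self, backbone, neck, head):
        super().__init__()
        self.backbone, self.neck, self.head = backbone, neck, head

    def forward(self, images):
        return self.head(self.neck(self.backbone(images)))


model = Detector(TinyBackbone(), ProjectionNeck([16, 32, 64], 32), SharedHead(32, num_classes=5))
outs = model(torch.randn(2, 3, 64, 64))
print([tuple(cls.shape) for cls, _ in outs])
# [(2, 5, 32, 32), (2, 5, 16, 16), (2, 5, 8, 8)]
```

Swapping `ProjectionNeck` for an FPN or PAN module changes only how the levels are mixed. The backbone and head interfaces (a list of feature maps in, a list of feature maps out) stay the same.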

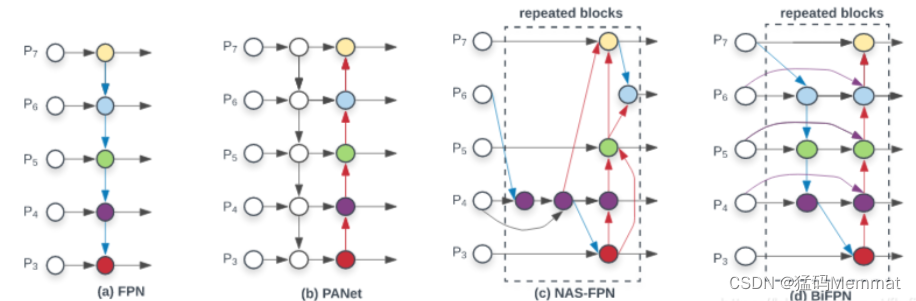

The neck can be designed in many different ways:

(a) FPN

(b) PANet

(c) NAS-FPN

(d) BiFPN

1. FPN

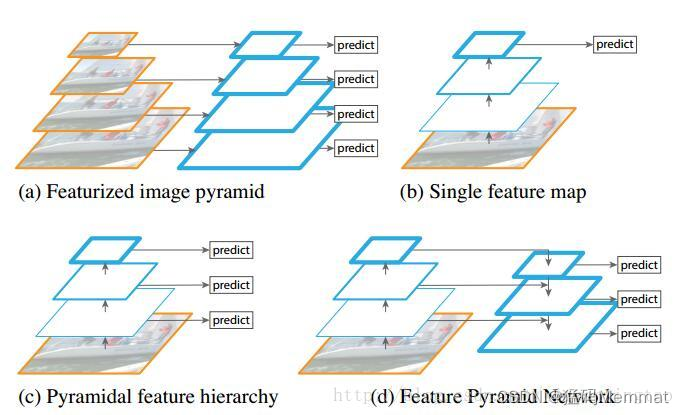

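FPN (Feature Pyramid Network) is a method for handling the multi-scale problem, proposed by FAIR in 2017. Its main idea is to build a pyramid of feature maps and extract object features at different scales from it, which improves detection accuracy.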

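FPN combines two pathways:

- The bottom-up pathway is the backbone itself. It repeatedly downsamples the input and produces feature maps of decreasing resolution.
- The top-down pathway starts from the coarsest (lowest-resolution) map and upsamples it step by step. At each level, the upsampled map is merged with the backbone map of the same resolution through a lateral connection: a 1x1 convolution followed by element-wise addition.

This passes the strong semantic information of the high-level maps down to the high-resolution maps, while the fine localization detail of the low-level maps is preserved. As a result, FPN improves accuracy on multi-scale detection without noticeably slowing the detector down.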
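The top-down pathway with lateral connections can be sketched in a few lines of PyTorch. This is a simplified illustration, not the full listing in this article: it has no GroupNorm option and no extra P6 level. It assumes the backbone features arrive as a list ordered from fine to coarse (for example C2 to C5). The class name `SimpleFPN` is hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class SimpleFPN(nn.Module):
    """Minimal FPN neck (hypothetical): lateral 1x1 convs, top-down upsampling + addition, 3x3 smoothing.

    `in_channels` lists the backbone widths ordered fine -> coarse, e.g. [C2, C3, C4, C5].
    """

    def __init__(self, in_channels, out_channels=256):
        super().__init__()
        # Lateral connections: 1x1 convs bring every backbone level to the same width
        self.lateral = nn.ModuleList(nn.Conv2d(c, out_channels, 1) for c in in_channels)
        # A 3x3 conv on every merged map (used in FPN to reduce upsampling aliasing)
        self.smooth = nn.ModuleList(
            nn.Conv2d(out_channels, out_channels, 3, padding=1) for _ in in_channels
        )

    def forward(self, feats):
        laterals = [conv(f) for conv, f in zip(self.lateral, feats)]
        # Top-down pathway: begin at the coarsest level and move towards finer ones
        merged = [laterals[-1]]
        for lat in reversed(laterals[:-1]):
            top = F.interpolate(merged[0], size=lat.shape[-2:], mode="nearest")
            merged.insert(0, lat + top)  # element-wise sum with the lateral feature
        return [conv(m) for conv, m in zip(self.smooth, merged)]  # [P2, ..., P5]


# Random tensors shaped like ResNet-50 C2-C5 for a 256x256 input
widths = [256, 512, 1024, 2048]
feats = [torch.randn(1, c, 256 // s, 256 // s) for c, s in zip(widths, [4, 8, 16, 32])]
outs = SimpleFPN(widths, out_channels=256)(feats)
print([tuple(p.shape) for p in outs])
# [(1, 256, 64, 64), (1, 256, 32, 32), (1, 256, 16, 16), (1, 256, 8, 8)]
```

The sketch upsamples to `size=lat.shape[-2:]` rather than using a fixed `scale_factor=2`. This keeps adjacent levels aligned even when an odd input size makes a coarser level round down.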

```python
import collections
import numpy as np

import torch
import torch.nn as nn
import torch.nn.functional as F
from torch.nn import init

from core.config import cfg
import utils.net as net_utils
import modeling.ResNet as ResNet
from modeling.generate_anchors import generate_anchors
from modeling.generate_proposals import GenerateProposalsOp
from modeling.collect_and_distribute_fpn_rpn_proposals import CollectAndDistributeFpnRpnProposalsOp
import nn as mynn

# Note: fpn_level_info_ResNet*_conv5, get_min_max_levels and topdown_lateral_module
# are defined elsewhere in the project (not shown in this excerpt).

# Lowest and highest pyramid levels in the backbone network. For FPN, we assume
# that all networks have 5 spatial reductions, each by a factor of 2. Level 1
# would correspond to the input image, hence it does not make sense to use it.
LOWEST_BACKBONE_LVL = 2   # e.g., "conv2"-like level
HIGHEST_BACKBONE_LVL = 5  # e.g., "conv5"-like level


# ---------------------------------------------------------------------------- #
# FPN with ResNet
# ---------------------------------------------------------------------------- #

def fpn_ResNet50_conv5_body():
    return fpn(
        ResNet.ResNet50_conv5_body, fpn_level_info_ResNet50_conv5()
    )


def fpn_ResNet50_conv5_body_bup():
    # Same as above, plus the PANet bottom-up path
    return fpn(
        ResNet.ResNet50_conv5_body, fpn_level_info_ResNet50_conv5(),
        panet_buttomup=True
    )


def fpn_ResNet50_conv5_P2only_body():
    return fpn(
        ResNet.ResNet50_conv5_body,
        fpn_level_info_ResNet50_conv5(),
        P2only=True
    )


def fpn_ResNet101_conv5_body():
    return fpn(
        ResNet.ResNet101_conv5_body, fpn_level_info_ResNet101_conv5()
    )


def fpn_ResNet101_conv5_P2only_body():
    return fpn(
        ResNet.ResNet101_conv5_body,
        fpn_level_info_ResNet101_conv5(),
        P2only=True
    )


def fpn_ResNet152_conv5_body():
    return fpn(
        ResNet.ResNet152_conv5_body, fpn_level_info_ResNet152_conv5()
    )


def fpn_ResNet152_conv5_P2only_body():
    return fpn(
        ResNet.ResNet152_conv5_body,
        fpn_level_info_ResNet152_conv5(),
        P2only=True
    )


# ---------------------------------------------------------------------------- #
# Functions for bolting FPN onto a backbone architecture
# ---------------------------------------------------------------------------- #

class fpn(nn.Module):
    """Add FPN connections based on the model described in the FPN paper.

    fpn_output_blobs is in reversed order: e.g [fpn5, fpn4, fpn3, fpn2]
    similarly for fpn_level_info.dims: e.g [2048, 1024, 512, 256]
    similarly for spatial_scale: e.g [1/32, 1/16, 1/8, 1/4]
    """

    def __init__(self, conv_body_func, fpn_level_info, P2only=False, panet_buttomup=False):
        super().__init__()
        self.fpn_level_info = fpn_level_info
        self.P2only = P2only
        self.panet_buttomup = panet_buttomup

        self.dim_out = fpn_dim = cfg.FPN.DIM
        min_level, max_level = get_min_max_levels()
        self.num_backbone_stages = len(fpn_level_info.blobs) - (min_level - LOWEST_BACKBONE_LVL)
        fpn_dim_lateral = fpn_level_info.dims
        self.spatial_scale = []  # a list of scales for FPN outputs

        #
        # Step 1: recursively build down starting from the coarsest backbone level
        #
        # For the coarsest backbone level: 1x1 conv only seeds recursion
        if cfg.FPN.USE_GN:
            self.conv_top = nn.Sequential(
                nn.Conv2d(fpn_dim_lateral[0], fpn_dim, 1, 1, 0, bias=False),
                nn.GroupNorm(net_utils.get_group_gn(fpn_dim), fpn_dim,
                             eps=cfg.GROUP_NORM.EPSILON)
            )
        else:
            self.conv_top = nn.Conv2d(fpn_dim_lateral[0], fpn_dim, 1, 1, 0)
        self.topdown_lateral_modules = nn.ModuleList()
        self.posthoc_modules = nn.ModuleList()

        # For other levels add top-down and lateral connections
        for i in range(self.num_backbone_stages - 1):
            self.topdown_lateral_modules.append(
                topdown_lateral_module(fpn_dim, fpn_dim_lateral[i + 1])
            )

        # Post-hoc scale-specific 3x3 convs
        for i in range(self.num_backbone_stages):
            if cfg.FPN.USE_GN:
                self.posthoc_modules.append(nn.Sequential(
                    nn.Conv2d(fpn_dim, fpn_dim, 3, 1, 1, bias=False),
                    nn.GroupNorm(net_utils.get_group_gn(fpn_dim), fpn_dim,
                                 eps=cfg.GROUP_NORM.EPSILON)
                ))
            else:
                self.posthoc_modules.append(
                    nn.Conv2d(fpn_dim, fpn_dim, 3, 1, 1)
                )

            self.spatial_scale.append(fpn_level_info.spatial_scales[i])

        # Added for the PANet bottom-up path:
        # conv1 modules use a 3x3 kernel with stride 2 (downsampling),
        # conv2 modules use a 3x3 kernel with stride 1
        if self.panet_buttomup:
            self.panet_buttomup_conv1_modules = nn.ModuleList()
            self.panet_buttomup_conv2_modules = nn.ModuleList()
            for i in range(self.num_backbone_stages - 1):
                if cfg.FPN.USE_GN:
                    self.panet_buttomup_conv1_modules.append(nn.Sequential(
                        nn.Conv2d(fpn_dim, fpn_dim, 3, 2, 1, bias=True),
                        nn.GroupNorm(net_utils.get_group_gn(fpn_dim), fpn_dim,
                                     eps=cfg.GROUP_NORM.EPSILON),
                        nn.ReLU(inplace=True)
                    ))
                    self.panet_buttomup_conv2_modules.append(nn.Sequential(
                        nn.Conv2d(fpn_dim, fpn_dim, 3, 1, 1, bias=True),
                        nn.GroupNorm(net_utils.get_group_gn(fpn_dim), fpn_dim,
                                     eps=cfg.GROUP_NORM.EPSILON),
                        nn.ReLU(inplace=True)
                    ))
                else:
                    self.panet_buttomup_conv1_modules.append(
                        nn.Conv2d(fpn_dim, fpn_dim, 3, 2, 1)
                    )
                    self.panet_buttomup_conv2_modules.append(
                        nn.Conv2d(fpn_dim, fpn_dim, 3, 1, 1)
                    )

                # self.spatial_scale.append(fpn_level_info.spatial_scales[i])

        #
        # Step 2: build up starting from the coarsest backbone level
        #
        # Check if we need the P6 feature map
        if not cfg.FPN.EXTRA_CONV_LEVELS
```
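The listing above only constructs the PANet layers; its forward pass is not part of this excerpt. The bottom-up path augmentation described in the PANet paper uses such layers as follows:

- N2 is simply P2.
- Each N_{i+1} is formed by downsampling N_i with a stride-2 3x3 conv, adding P_{i+1}, and applying a stride-1 3x3 conv.

The sketch below implements this under stated assumptions. The class name `BottomUpPath` is hypothetical. The ReLU after every conv follows the GroupNorm branch above, but GroupNorm itself is omitted. Every level is assumed to be exactly half the size of the previous one.

```python
import torch
import torch.nn as nn


class BottomUpPath(nn.Module):
    """PANet-style bottom-up path augmentation (hypothetical sketch).

    Takes FPN outputs [P2, P3, P4, P5] (fine -> coarse, all `dim` channels) and returns
    [N2, N3, N4, N5] with N2 = P2 and N_{i+1} = conv2(P_{i+1} + conv1(N_i)).
    """

    def __init__(self, dim, num_levels):
        super().__init__()

        def conv_relu(stride):
            # Assumption: ReLU after each conv, as in the GroupNorm branch of the listing
            return nn.Sequential(nn.Conv2d(dim, dim, 3, stride, 1), nn.ReLU(inplace=True))

        # conv1: 3x3, stride 2, halves N_i so it matches the size of P_{i+1}
        self.conv1 = nn.ModuleList(conv_relu(2) for _ in range(num_levels - 1))
        # conv2: 3x3, stride 1, processes the fused map into N_{i+1}
        self.conv2 = nn.ModuleList(conv_relu(1) for _ in range(num_levels - 1))

    def forward(self, p_feats):
        outs = [p_feats[0]]  # N2 is simply P2
        for i, p_next in enumerate(p_feats[1:]):
            fused = self.conv1[i](outs[-1]) + p_next  # lateral element-wise addition
            outs.append(self.conv2[i](fused))
        return outs


pan = BottomUpPath(dim=256, num_levels=4)
p_feats = [torch.randn(1, 256, s, s) for s in (64, 32, 16, 8)]
print([tuple(n.shape) for n in pan(p_feats)])
# [(1, 256, 64, 64), (1, 256, 32, 32), (1, 256, 16, 16), (1, 256, 8, 8)]
```

FPN's top-down flow carries semantics from coarse to fine levels. This extra bottom-up pass does the reverse: it lets fine-level localization information reach the coarse levels through only a few layers.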
